Industry is constantly on the lookout for state-of-the-art technologies that can give its products and services an edge. While premier academic institutions regularly produce new research in their labs, they often miss the exact focus that industry requires. To bridge this disconnect, interactions between the two were kickstarted a few years ago. Aptly named Confluence, the initiative brought together senior leaders from various industry domains, led by J. Ramachandran, industry veteran and Founder-CEO of the data-visualization startup Gramener, and faculty members from various IIIT-H labs, led by Prof. Vasudeva Varma, who is also CEO of CIE. “We were in the search of industry-enabling and consumable solutions. This industry-institute connect is what Vasu and I have demonstrated over the last 4 months,” says Ramachandran.

Content Authentication

In a bid to come up with offerings tailor-made for industry, the Information Retrieval and Extraction Lab (IREL) was chosen on a pilot basis. “We decided to focus on those solutions that are very relevant to industry and investigated on how to transform them into viable and commercializable products,” explains Prof. Varma. That is how the group narrowed its focus to three or four technology areas for which the lab had already developed solutions. One such area is content authentication. Starting from an academic perspective, the team first looked at how and where content authentication solutions are useful, sized the market potential, and then developed specific demos to bring home the power of the technology. Ram was impressed by IREL's content processing capabilities, which use machine learning and natural language processing and could be applied to fake news detection. “We deliberately reduced the footprint to one lab so that we could develop specific demos before scaling it up for industry,” he elaborates.

Fake-o-Meter

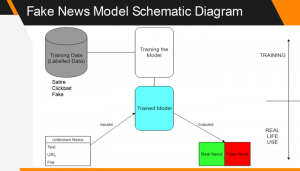

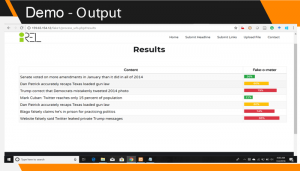

Uncommon a few years ago, the term “fake news” earned its infamous spot as 2017’s word of the year. One doesn’t have to look far to see how pervasive it has become. “There’s content out there with an intentionally wrong motive, technically labelled as disinformation as well as misinformation, which is just wrong content,” explains Prof. Varma. To tell authentic information from fake, the team created a tool known as a “fake-o-meter”. It is a web-based engine into which one can paste a tweet or the headline of a news story and submit it to get a colour-coded score, expressed as a percentage, indicating how likely the item is to be fake. Lower percentages are coded green, indicating that the news has a low probability of being fake; higher percentages are coded amber or red, indicating a high degree of fakeness. Using a combination of machine learning algorithms and sophisticated natural language processing, the tool looks at two things: the content itself and the manner in which it is being propagated. Previous research has already demonstrated that fake news is more likely to use language that is subjective, emotional and hyperbolic. In terms of dissemination, too, fake news spreads farther and far more quickly than true stories. “The virality of content itself is often times a sheer giveaway about its authenticity,” says Prof. Varma.
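To make the colour coding concrete, here is a minimal Python sketch of how a fakeness percentage might be mapped to a colour band. The function name and the cut-off values (40 and 70) are illustrative assumptions, not the thresholds the IREL tool actually uses, and the sketch says nothing about how the score itself is computed.

```python
def colour_for_fake_score(fake_percent: float,
                          amber_from: float = 40.0,
                          red_from: float = 70.0) -> str:
    """Map a fakeness score (0-100) to a colour band.

    The thresholds are hypothetical defaults chosen for illustration only.
    """
    if not 0.0 <= fake_percent <= 100.0:
        raise ValueError("fake_percent must be between 0 and 100")
    if fake_percent >= red_from:
        return "red"      # high probability of being fake
    if fake_percent >= amber_from:
        return "amber"    # uncertain, worth a closer look
    return "green"        # low probability of being fake


if __name__ == "__main__":
    for score in (12.0, 55.0, 91.0):
        print(score, colour_for_fake_score(score))
```

Under these assumed cut-offs, a headline scored at 12 percent would be shown in green, one at 55 percent in amber and one at 91 percent in red.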

The tool works for several languages, including Spanish and Chinese, and covers various forms of online content, from blogs, news stories and tweets to genres such as bizarre news, hate speech (hate-o-meter) and clickbait.

Uniqueness

While the world over the focus has been on finding ways to tell real information from fake online, the research solution from IREL is unique in the natural language processing focus that it brings. “This lab is one of the very few ones in the world that focuses on this research,” says Prof. Varma. The lab offers fake news detection solutions tailor-made for various domains, such as celebrity news, health news and politics. Other existing verification efforts to sift the fake from the real are manual in nature. However, time constraints and lack of domain expertise severely hamper such efforts. Hence, automated solutions are the way ahead. Asked if they will replace or be as good as human efforts, Prof. Varma replies in the negative. “Not right now. But can it help an editor who would otherwise process 1-2 stories a day, process about 50 stories a day? Yes. A 50-fold productivity enhancement is being brought about by these tools and techniques. That’s the value we’re bringing,” he affirms.

The Approach

For the team, putting the human at the centre of the industry-institute interaction is significant. “Our approach is not unilateral by any means. Every step of the way, we’ve been reaching out to industry for validation. At the same time, research also matters. Here is where the true confluence is in action,” says Ram. Describing the Fake-o-meter as an attempt to create a common mental model between people, Ram adds that it is just one aspect of enabling people to access and consume information in an authentic way. While the brainstorming sessions held as part of Confluence have since ceased, validation with industry is an ongoing process. The team is currently working on other viable solutions, such as Summarization and Cross-Purposing, both of which are expected to be disruptive in industry. According to Ram, “Common mental models between people, whether it is for Information consumption, or authentication and summarization: all this helps people understand each other, hence productivity goes up in every human enterprise. And overall humanity gains.”

Sarita Chebbi is a compulsive early riser. Devourer of all news. Kettlebell enthusiast. Nit-picker of the written word especially when it’s not her own.
