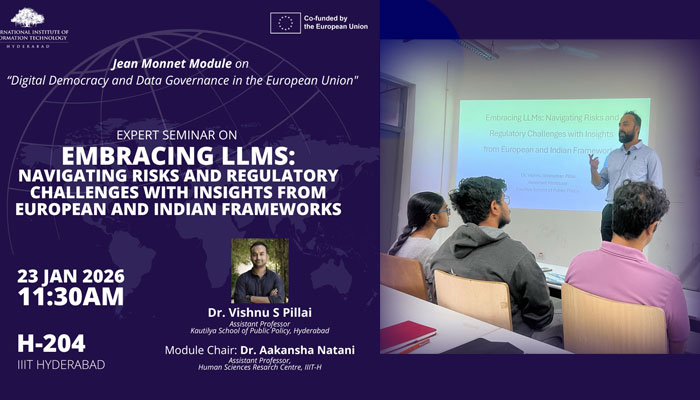

Dr. Vishnu Sivarudran Pillai from the Kautilya School of Public Policy gave a talk on “Regulatory Challenges of Large Language Models” on 23 January. The seminar was organised under the Jean Monnet Module Grant Project on “Digital Democracy and Data Governance in the EU.”

In his talk, Dr. Pillai examined the regulatory challenges arising from the rapid advancement of Large Language Models (LLMs). Moving beyond abstract concerns around technological “singularity,” the session focused on the practical difficulties faced by policymakers in designing and enforcing safeguards within an increasingly AI-driven economy.

The seminar opened with a discussion of the transition from traditional, task-specific machine learning systems to foundational LLMs. Unlike earlier models, LLMs operate as general-purpose technologies, require vast computational resources, and remain largely opaque in their functioning. This shift, Dr. Pillai noted, significantly complicates regulatory efforts and foregrounds the Collingridge dilemma: the challenge of regulating emerging technologies at an early stage, when their broader societal implications, including bias, privacy risks, and information asymmetries, are not yet fully understood.

Dr. Pillai then compared global approaches to AI governance. The European Union’s precautionary framework, reflected in the EU AI Act, emphasises harm prevention through ex-ante regulation, prescriptive compliance requirements, detailed technical documentation, and stringent penalties. In contrast, India’s approach is more adaptive and evidence-based. Anchored in the SUTRA principles of Trust, Fairness, Transparency, and Innovation, and reflected in the Digital Personal Data Protection (DPDP) Act, 2023 and the proposed Digital India Act, this model seeks to balance regulatory oversight with the need to sustain innovation through iterative policy refinement.

The seminar concluded with an emphasis on what Dr. Pillai termed “intelligent regulation.” Such an approach, he argued, must carefully navigate trade-offs around transparency, protect fundamental rights, enable civil liability, and remain cognisant of technical and innovation constraints. Recognising that the rapid proliferation of AI technologies is inevitable, the session highlighted the need for risk-based and application-specific regulatory frameworks that ensure accountability while fostering a resilient and innovative digital ecosystem.

January 2026