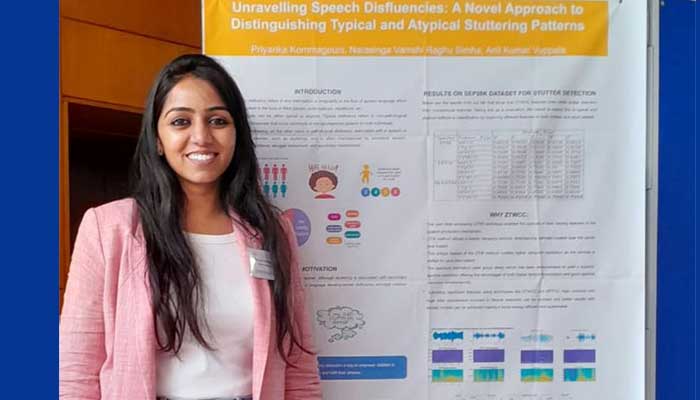

Priyanka Kommagouni, working under Prof. Anil Kumar Vuppala at the Speech Processing Lab, LTRC, won the best poster award at the Young Female Researchers in Speech Science and Technology Workshop (YFRSW-2023), part of INTERSPEECH 2023, held in Dublin, Ireland, on 19 August. The poster presented the research work "Unravelling Speech Disfluencies: A Novel Approach to Distinguishing Typical and Atypical Stuttering Patterns" by Priyanka Kommagouni, Narasinga Vamshi Raghu Simha, and Anil Kumar Vuppala. Here is a summary of the research work as explained by the authors:

Speech disfluency refers to any interruption or irregularity in the flow of spoken language, which can manifest as filled pauses, prolongations, repetitions, etc. Disfluencies can be either typical or atypical. Typical disfluency refers to non-pathological speech disfluencies that occur commonly in the spontaneous speech of most individuals. Atypical disfluency, on the other hand, is pathological disfluency associated with a speech or language disorder, such as stuttering, and is often characterised by excessive tension, speaking avoidance, struggle behaviours, and secondary mannerisms. Despite a considerable amount of research dedicated to detecting and analysing disfluencies, there has been limited investigation into classifying typical and atypical disfluencies in both adult and paediatric populations. Differentiating disfluencies is critical, especially for preschool children, as failing to intervene in time can increase the risk of continued stuttering and can negatively impact a child’s development. However, developing a model for classifying disfluency in children is challenging due to shorter durations, lower intensities, and reduced variability in stuttering compared to adult speech. Our future work focuses on disfluency classification in both adults and children. In this contribution, we collected spontaneous and read speech data, in Telugu, from school children aged 5-12, including both typical and atypical disfluencies. Our objective is to examine the feature space of typical and stuttering disfluencies and construct a classifier that can distinguish between the two categories with high accuracy.

August 2023