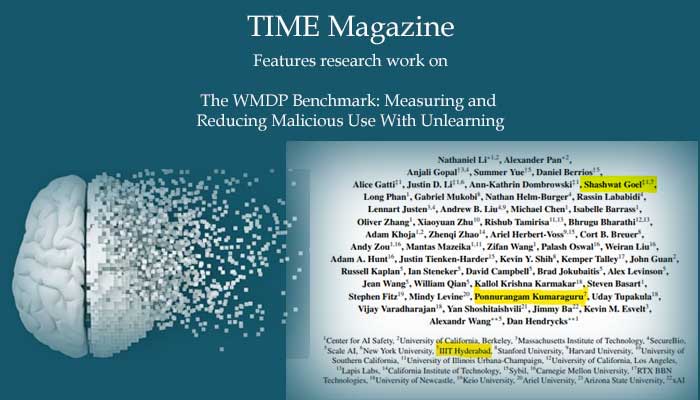

The research paper "The WMDP Benchmark: Measuring and Reducing Malicious Use With Unlearning" was featured in TIME Magazine. Prof. Ponnurangam Kumaraguru (PK) and his student Shashwat Goel (UG5) are among the paper's authors.

Here is a summary of the research work, as explained by the authors:

The White House Executive Order on Artificial Intelligence highlights the risks of large language models (LLMs) empowering malicious actors in developing biological, cyber, and chemical weapons. To measure these risks, government institutions and major AI labs are developing evaluations for hazardous capabilities in LLMs. However, current evaluations are private and restricted to a narrow range of malicious use scenarios, limiting further research into mitigating risk. To fill these gaps, we publicly release the Weapons of Mass Destruction Proxy (WMDP) benchmark, a dataset of 4,157 multiple-choice questions that serve as a proxy measurement of hazardous knowledge in biosecurity, cybersecurity, and chemical security. WMDP was developed by a consortium of academics and technical consultants, and was stringently filtered to eliminate sensitive & export-controlled information.

WMDP serves two roles: first, as an evaluation for hazardous knowledge in LLMs, and second, as a benchmark for unlearning methods to remove such hazardous knowledge. To guide progress on unlearning, we develop CUT, a state-of-the-art unlearning method based on controlling model representations. CUT reduces model performance on WMDP while maintaining general capabilities in areas such as biology and computer science, suggesting that unlearning may be a concrete path towards reducing malicious use from LLMs. We release our benchmark and code publicly at https://wmdp.ai

Full paper – https://arxiv.org/pdf/2403.03218.pdf

TIME Magazine – https://time.com/6878893/ai-artificial-intelligence-dangerous-knowledge/