Mounika Kamsali Veera’s award-winning poster showed how deep neural networks can automatically identify the language of a short speech sample uttered by an unknown speaker.

Interspeech is the world’s largest and most comprehensive annual conference on the science and technology of spoken language processing. Interspeech conferences emphasize interdisciplinary approaches addressing all aspects of speech science and technology, from fundamental theories to advanced applications, including computational modeling and technology development inspired by recent advances in artificial intelligence (AI) and machine learning (ML). According to the organizers of the conference, speech processing is not only at the heart of multimodal AI and ML; advanced ML tools were also first conceived and successfully deployed in building speech-based applications.

Aptly fitting Interspeech 2018’s theme of “Speech Research for Emerging Markets in MultiLingual Societies” was its venue: Hyderabad, India. Communication among Indians, especially the spoken kind, is unique and challenging for scientific exploration owing to our country’s large diversity in language, culture, food habits, and so on. Another factor driving the choice of venue was the ever-expanding market and consequent demand for businesses dependent on the internet. IT-enabled services face the challenge of handling conversations in multiple languages. The large speech research community in India is already addressing these issues with generous support from the federal and state governments and from industry. Apart from the distinguished speakers at the conference, which was held in September, there were various satellite events and workshops associated with it, such as a summer school on speech production and a workshop on machine learning in speech and language processing.

Indian Language Identification

Mounika Veera, who is a Lateral Entry candidate of the dual-degree programme in Electronics at IIIT-H, first presented her work on “Indian Language Identification Using Deep Neural Network Architectures” during Interspeech 2016 in San Francisco. She says that language identification has many potential uses, such as emergency situations requiring dialling of a rapid response number, like 911 in the US (people under stress tend to speak in their native tongue, even if they have some knowledge of the local language); travel services (arranging flight and hotel reservations); and communications-related applications (translation services, information services, customer care services). For her research, she used 5-second speech samples but went beyond the standard datasets that are typically made available. “I went to NIT Warangal and HCU where I could find people coming from different states. In this way, I got a chance to collect some real-time language data,” says Mounika. What differentiates her work from existing spoken language identification systems is that she used an attention-based deep neural network (DNN) architecture as opposed to a frame-based one. “The algorithm not only takes into consideration code-switching such as Hinglish, or other slang but also the ‘hmmm’ sounds that one creates while pausing or thinking during conversations”, explains Mounika.
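To make the contrast between frame-based and attention-based processing concrete, here is a minimal sketch in plain Python. It is not Mounika's architecture, which the article does not describe in detail; the `query` vector, the toy frame values, and the function names are all assumptions made for illustration. A frame-based summary treats every frame equally, while attention pooling gives each frame a weight and lets the more informative frames dominate the utterance-level summary.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def mean_pool(frames):
    """Frame-based summary: every frame counts equally."""
    dim = len(frames[0])
    return [sum(f[d] for f in frames) / len(frames) for d in range(dim)]


def attention_pool(frames, query):
    """Attention summary: frames that match `query` get larger weights.

    `query` stands in for parameters a real network would learn
    (hypothetical here); each frame's score is its dot product with it.
    """
    scores = [sum(x * q for x, q in zip(f, query)) for f in frames]
    weights = softmax(scores)
    dim = len(frames[0])
    pooled = [sum(w * f[d] for w, f in zip(weights, frames)) for d in range(dim)]
    return pooled, weights


# Toy utterance: three 2-dimensional frame features (made-up values).
frames = [[0.0, 0.0], [1.0, 0.0], [4.0, 0.0]]
query = [1.0, 0.0]

pooled, weights = attention_pool(frames, query)
print("attention weights:", weights)
print("mean pooled:", mean_pool(frames))
print("attention pooled:", pooled)
```

In this toy case the third frame receives most of the weight, so the attention summary sits much closer to it than the plain average does. In a trained system the weights would come from learned parameters rather than a hand-picked query.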

Young Female Researchers in Speech Science and Technology Workshop

Among the special events conducted as part of Interspeech 2018 were a panel discussion on Women in Science and a Workshop for Young Female Researchers in Speech Science and Technology (YFRSW) 2018. This is a unique workshop that, as per its website, is designed to foster interest in research among women at the undergraduate or master’s level who have not yet committed to getting a PhD in speech science or technology areas, but who have had some research experience at their colleges or universities via individual or group projects. On September 1st this year, the day-long workshop held at IIIT-H featured panel discussions with senior female researchers in the field, student poster presentations and a mentoring session.

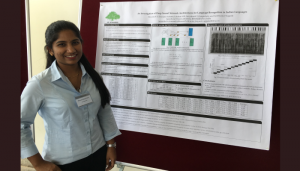

Best Poster Award

Mounika presented a poster at the YFRSW workshop and won first place. For her poster presentation, Mounika built upon the core idea of her thesis work by demonstrating how relevant features can be extracted based on the information needed. She says that for speech tasks, the features extracted are predominantly those that carry information about the vocal tract. However, to obtain (vocal) source information, Mounika extracted other features too and discovered that the two types of features were complementary to each other for language identification. On being asked what made her poster stand out, Mounika says, “My work, which involved research in 13 Indian languages, resonated with this year’s theme of speech research in multilingual societies. And I’m also a good presenter!”
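One common way to exploit complementary feature streams is to combine the scores of two classifiers, one per feature type. The sketch below assumes such score-level fusion purely for illustration; the article does not say how the vocal tract and source features were combined, and the language labels, score values, and the `alpha` weight are all hypothetical.

```python
def fuse_scores(tract_scores, source_scores, alpha=0.5):
    """Weighted average of two per-language score dictionaries.

    alpha weights the vocal-tract system; (1 - alpha) weights the
    source system. Both inputs must cover the same languages.
    """
    if tract_scores.keys() != source_scores.keys():
        raise ValueError("both systems must score the same languages")
    return {
        lang: alpha * tract_scores[lang] + (1 - alpha) * source_scores[lang]
        for lang in tract_scores
    }


def predict(scores):
    """Return the language with the highest score."""
    return max(scores, key=scores.get)


# Made-up scores for one utterance from two hypothetical systems.
tract = {"Telugu": 0.40, "Hindi": 0.45, "Tamil": 0.15}
source = {"Telugu": 0.55, "Hindi": 0.25, "Tamil": 0.20}

fused = fuse_scores(tract, source)
print("vocal-tract system alone:", predict(tract))
print("after fusion:", predict(fused))
```

Here the vocal-tract system alone narrowly prefers one language, but the source system's evidence tips the fused decision to another, which is the sense in which two feature types can complement each other.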

Academics and Career

Mounika currently works as an Artificial Intelligence engineer at Huddl AI, a startup based in Santa Clara, US. Her work there involves automating tasks such as compiling meeting notes, understanding deadlines and keeping track of the tasks each person in a business meeting has been assigned. She describes it as something along the lines of a personal assistant that is intelligent enough to learn who is on the call and what is being discussed, and to note down the tasks assigned to each of the attendees. “I am personally working on the task of identifying the speakers in a given meeting scenario”, says Mounika. Tracing her first signs of inclination towards speech research to a break during her 1st year of B.Tech, she says, “I learned about silent sound technology at that time. It was about converting lip movements or whispers into speech. I was so fascinated and drawn by it, I knew I wanted to continue further in this field.” Discovering the Lateral Entry programme at IIIT-H was somewhat fortuitous. Mounika was one of only two candidates selected at the all-India level for lateral entry to MS by Research (ECE) + BTech (ECE).

Joining the Speech and Vision lab for her master’s thesis seemed to be a given for Mounika. She credits her current success to the constant motivation of her parents as well as her advisor, Prof. Anil Kumar Vuppula. “Prof. Vuppula often likened research to the making of a movie. He would say that only when you are creative and weave a beautiful story, and work hard behind the scenes, will the audience appreciate it. It’s not about directing 100 movies that people watch and forget, but all about that one blockbuster hit that will last in the minds of the people,” she reminisces.

Family and Institute

Considering herself lucky to have such supportive parents who gave her freedom to explore opportunities and never discriminated based on gender, Mounika remarks, “My parents as well as my crazy sister believed in me and my ideas. I have led a balanced life, doing good work and having my share of fun.” When not immersed in work, Mounika can be found singing like a lark. “I can’t help it! I have a love for music and have even performed in IIIT-H,” she says. She goes on to add that being from a reputed institute like IIIT-H has made a great difference. “It has been the bridge between me and the outer world. It’s not just about giving me opportunities but also the confidence to articulate and present my work.”
